The Limit

John Krahn's 2001 Hardy Lecture "The limits of limit equilibrium analyses" describes some limitations of the Limit Equilibrium method:

> The limit equilibrium method of slices is based purely on the principle of statics; that is, the summation of moments, vertical forces, and horizontal forces. The method says nothing about strains and displacements, and as a result it does not satisfy displacement compatibility. It is this key piece of missing physics that creates many of the difficulties with the limit equilibrium method. [1]

Twenty-five years later, computational power, modelling techniques, and access to resources for learning how to model the more complete physics of slopes have improved dramatically. Still, Limit Equilibrium remains the workhorse of Geotechnical Engineering. I want to understand why.

I believe that better models and better data can help us find gains that lead to more economic, environmentally friendly designs and enable projects that otherwise would not be possible. We want to be conservative in our designs, but not excessively. We want tools that are as simple as the problem allows, but not simpler. My sense is that the variability of Limit Equilibrium practice is itself a poorly characterized risk, and that understanding it is a prerequisite for assessing when to use more complex tools. This is the equilibrium I want to solve.

For more of my thoughts about Limit Equilibrium in geotechnical practice, keep reading. Otherwise, please check out the Project Simulator! And feel free to contact me if you have anything to say.

Why Do We Engineer Earth?

We need to apply engineering principles to our landscapes, slopes, and rivers because we want something from them. We engineer to mitigate risk, and risk requires consequence. If there is no consequence, or no exposure to the results of a landslide or a flood, there is no reason to engineer against its occurrence [2]. Put another way, every reason to engineer a slope is a reason we do not want it to fail, and there can be many such reasons.

Along with many reasons not to want a slope to fail, there are many ways to make a slope stay up, and many further ways to calculate whether a given slope will stay up. The workhorse technique for Geotechnical Engineers is Limit Equilibrium analysis.

Factor of Safety

The Factor of Safety is a common concept across many engineering domains. It is the ratio of how strong something is to how strong it needs to be:

\[ \text{FoS} = \frac{\text{available strength}}{\text{required strength}} \]

If the Factor of Safety is greater than 1, the slope is stable; if it is less than 1, the slope is unstable [3].

The Factor of Safety is not something that we can measure. It requires using a model of how forces are distributed and applied, and how the material reacts. The core of geotechnical engineering is choosing how to model. By that, I mean choosing some way to represent the incredibly complex natural environment (high-dimensional) in a clear way that allows us to make practical decisions, like calculating a scalar (low-dimensional) Factor of Safety.

Limit Equilibrium Calculation

The most common way to model the stability of a slope in practice is Limit Equilibrium. Many regulations also directly require that a given slope or structure have a calculated Factor of Safety above some threshold value.

Limit Equilibrium analysis is a calculation technique used to estimate Factor of Safety in geotechnics by assuming, roughly speaking, that the landslide moves as a solid block and by calculating the ratio of the forces that resist sliding (soil strength) against the forces that drive movement (gravity) [4].
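To make the "resisting over driving" ratio concrete, here is a minimal sketch of the Ordinary (Fellenius) method of slices, one of the simplest limit equilibrium formulations. It is not necessarily what any particular software or the Project Simulator uses: it ignores interslice forces entirely, and it assumes the slice geometry has already been computed from a trial slip surface. The `Slice` fields, units, and example numbers are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Slice:
    """One slice of a trial slip surface (hypothetical field names; units kN, m, kPa)."""
    weight: float               # W: total weight of the slice per metre run (kN/m)
    base_angle_deg: float       # alpha: inclination of the slice base (degrees)
    base_length: float          # l: length of the slice base (m)
    cohesion: float             # c': effective cohesion on the base (kPa)
    friction_deg: float         # phi': effective friction angle on the base (degrees)
    pore_pressure: float = 0.0  # u: pore-water pressure on the base (kPa)


def fellenius_fos(slices: list[Slice]) -> float:
    """Ordinary (Fellenius) method of slices: sum of resisting / sum of driving forces.

    Interslice forces are ignored, so the effective normal force on each base
    is simply W*cos(alpha) - u*l.
    """
    resisting = 0.0
    driving = 0.0
    for s in slices:
        alpha = math.radians(s.base_angle_deg)
        phi = math.radians(s.friction_deg)
        effective_normal = s.weight * math.cos(alpha) - s.pore_pressure * s.base_length
        resisting += s.cohesion * s.base_length + effective_normal * math.tan(phi)
        driving += s.weight * math.sin(alpha)
    return resisting / driving


# Hypothetical three-slice geometry, for illustration only.
trial_surface = [
    Slice(weight=80.0, base_angle_deg=40.0, base_length=2.6, cohesion=5.0, friction_deg=30.0),
    Slice(weight=150.0, base_angle_deg=25.0, base_length=2.2, cohesion=5.0, friction_deg=30.0, pore_pressure=10.0),
    Slice(weight=90.0, base_angle_deg=5.0, base_length=2.0, cohesion=5.0, friction_deg=30.0, pore_pressure=5.0),
]
print(f"FoS = {fellenius_fos(trial_surface):.2f}")
```

The method is chosen here only because it needs no iteration. More rigorous methods (for example Bishop's simplified method) make the base normal force depend on the Factor of Safety itself, so they must be solved iteratively, and a full analysis also searches over many trial surfaces for the lowest value.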

As Krahn notes [1], this misses some of the physics of real landslides: principally, deformation and displacement compatibility.

In some cases, this missing physics can be hazardous. If a soil's strength changes as it deforms (for example, brittle or strain-softening behaviour), Factors of Safety can be significantly overestimated. In practice, one response is to introduce a numerical, but still non-physical, correction for brittleness (for example, the Norwegian sprøhetsforholdet [5]); another is to use residual strength values. These responses are useful, necessary, well reasoned, and justified, but they feel unsatisfying because they compensate broadly for missing physics rather than modelling it directly.
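A rough sense of the size of the effect can be had from the simplest possible case: a dry, cohesionless infinite slope, where FoS = tan(φ')/tan(β). The sketch below assumes hypothetical peak and residual friction angles and compares both results against a required Factor of Safety scaled by the flat 1.15 brittleness multiplier described in the footnotes. It is an illustration of why peak versus residual strength matters, not a model of strain softening.

```python
import math


def infinite_slope_fos_dry(friction_deg: float, slope_deg: float) -> float:
    """Dry, cohesionless infinite slope: FoS = tan(phi') / tan(beta)."""
    return math.tan(math.radians(friction_deg)) / math.tan(math.radians(slope_deg))


# Hypothetical values, chosen only to illustrate the size of the effect.
SLOPE_DEG = 20.0
PEAK_PHI_DEG = 32.0      # strength before softening
RESIDUAL_PHI_DEG = 20.0  # strength after large strains

fos_peak = infinite_slope_fos_dry(PEAK_PHI_DEG, SLOPE_DEG)          # about 1.72
fos_residual = infinite_slope_fos_dry(RESIDUAL_PHI_DEG, SLOPE_DEG)  # 1.00, right at the limit

# Flat brittleness correction: the required FoS is scaled up,
# rather than the loss of strength with deformation being modelled.
BASE_REQUIRED_FOS = 1.3
BRITTLENESS_MULTIPLIER = 1.15
required_fos = BASE_REQUIRED_FOS * BRITTLENESS_MULTIPLIER  # 1.495

print(f"peak FoS {fos_peak:.2f}, residual FoS {fos_residual:.2f}, required {required_fos:.3f}")
print("passes with peak strength:", fos_peak >= required_fos)
print("passes with residual strength:", fos_residual >= required_fos)
```

The same slope passes comfortably with peak strength and sits exactly at failure with residual strength; the multiplier only moves the target, so whether it is adequate depends on how much softening actually occurs.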

We have the computational ability to model much more of this behavior directly. We can use more advanced numerical methods and represent deformation, staged loading, pore pressure changes, and more complex geometry and material responses. But doing so is more complex and often adds cost.

Everyone in practice knows that Limit Equilibrium is a relatively simple model and has limitations. The practical question is: when is it worth using a more complex model?

That is harder than it sounds, and sometimes frustrating for someone who enjoys physics and modelling for its own sake. We work within a complicated regulatory framework where the standard of care is still strongly centered on Limit Equilibrium. The accuracy and value of Limit Equilibrium as a tool have to be considered in the context of decision-making focused on the Factor of Safety and, more broadly, of how we get information about the slopes we want to model.

Why Does Limit Equilibrium Modelling Persist?

I think Limit Equilibrium persists because it is useful, and for more than one reason:

- Regulation: many design checks are codified around Factor of Safety thresholds.

- Transparency / auditability: the logic is relatively hand-checkable and easier to peer review.

- Speed and cost: Limit Equilibrium makes sensitivity studies and iterations fast.

- Communication and liability: clients and regulators understand Factor of Safety; more advanced numerical model outputs can be harder to explain and defend without specialized expertise.

- Tooling and habits: software availability, training, and standard workflows all reinforce Limit Equilibrium.

- Input uncertainty dominates: better physics does not help as much when stratigraphy, groundwater, and strength parameters are poorly constrained.
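To illustrate the last point, a minimal Monte Carlo sketch can propagate uncertainty in a single input through the simplest limit equilibrium equation. It assumes a dry, cohesionless infinite slope and a normally distributed friction angle; the slope angle, mean, and standard deviation are hypothetical, and real problems also carry uncertainty in stratigraphy and groundwater.

```python
import math
import random
import statistics


def infinite_slope_fos_dry(friction_deg: float, slope_deg: float) -> float:
    """Dry, cohesionless infinite slope: FoS = tan(phi') / tan(beta)."""
    return math.tan(math.radians(friction_deg)) / math.tan(math.radians(slope_deg))


def sample_fos(n: int, slope_deg: float, phi_mean_deg: float, phi_sd_deg: float,
               seed: int = 1) -> list[float]:
    """Propagate a normally distributed friction angle (an assumption) through the FoS equation."""
    rng = random.Random(seed)  # seeded so the example is repeatable
    return [infinite_slope_fos_dry(rng.gauss(phi_mean_deg, phi_sd_deg), slope_deg)
            for _ in range(n)]


# Hypothetical inputs: a 25 degree slope and phi' = 35 +/- 3 degrees.
deterministic = infinite_slope_fos_dry(35.0, 25.0)  # about 1.50
samples = sample_fos(20_000, slope_deg=25.0, phi_mean_deg=35.0, phi_sd_deg=3.0)

mean_fos = statistics.fmean(samples)
sd_fos = statistics.stdev(samples)
p_below_one = sum(f < 1.0 for f in samples) / len(samples)

print(f"deterministic FoS {deterministic:.2f}")
print(f"sampled FoS mean {mean_fos:.2f}, standard deviation {sd_fos:.2f}, P(FoS < 1) {p_below_one:.4f}")
```

Even with a perfect model, a few degrees of uncertainty in one strength parameter spreads the result by roughly a tenth of the Factor of Safety either way, which is part of why better physics alone does not settle the question.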

While there are many good reasons to use a Limit Equilibrium model, we can do better physically, and my intuition is that doing so can improve our designs and decisions. Better testing and instrumentation can improve our inputs and help calibrate our models, but they come at a cost.

Benefit-Cost Equilibrium

The Benefit-Cost Ratio is another simple, useful, and almost tautological idea, in the same way that the Factor of Safety is:

\[ \text{BCR} = \frac{\text{Benefits}}{\text{Costs}} \]

An action is worth doing when the Benefits are greater than the Costs (a Benefit-Cost Ratio above 1). As with the Factor of Safety, the difficulty is in the inputs: deciding what counts as a benefit, what counts as a cost, and how much uncertainty lies behind each. Formal quantification of costs and benefits is well developed in risk disciplines like seismic hazard, where insurance pricing, for example, is an exercise in estimating expected loss under Earth Science uncertainty and setting premiums so that, for the insurer, the benefits of providing coverage outweigh its expected cost.
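A minimal sketch of that expected-loss arithmetic, assuming the only benefit of a mitigation is the reduction in expected annual loss, discounted over a design life. The failure probabilities, consequence, discount rate, and design life below are hypothetical.

```python
def present_value_of_annual(amount: float, rate: float, years: int) -> float:
    """Present value of a constant annual amount received for `years` years."""
    if rate == 0.0:
        return amount * years
    return amount * (1.0 - (1.0 + rate) ** -years) / rate


def benefit_cost_ratio(p_fail_before: float, p_fail_after: float, consequence: float,
                       mitigation_cost: float, rate: float, years: int) -> float:
    """BCR of a mitigation whose benefit is the reduction in expected annual loss."""
    expected_loss_before = p_fail_before * consequence  # expected annual loss without mitigation
    expected_loss_after = p_fail_after * consequence    # expected annual loss with mitigation
    avoided_per_year = expected_loss_before - expected_loss_after
    benefits = present_value_of_annual(avoided_per_year, rate, years)
    return benefits / mitigation_cost


# Hypothetical numbers for illustration only.
bcr = benefit_cost_ratio(p_fail_before=1 / 100, p_fail_after=1 / 1000, consequence=5_000_000,
                         mitigation_cost=500_000, rate=0.03, years=50)
print(f"BCR = {bcr:.2f}", "(worth doing)" if bcr > 1 else "(not worth doing)")
```

Every input here is itself uncertain, and the answer is sensitive to all of them, which is the same difficulty the Factor of Safety faces.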

The choice of model directly influences this calculation. However, the choice between Limit Equilibrium and a numerical model does not matter in isolation. What matters is the operational decision the model enables. How hard will the ground shake? How steep can the slope stand? Should we put our house below it? Modelling is valuable to the extent that it improves the decision.

It is a value-of-information question: is the extra information provided by more complicated models worth the extra cost of obtaining and defending those models?

- The benefit is a more accurate model that could allow for better decision-making, and in some cases, important capital savings through better designs.

- The cost is the extra effort from a more complicated model. Those costs are not only computational. They include additional lab work, more specialized expertise, more time, and sometimes more professional risk when deviating from standard practice.
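One standard way to frame this is the expected value of perfect information (EVPI): the most a decision-maker should pay for information that removes the uncertainty entirely. The sketch below uses a hypothetical two-state, two-design problem; the prior probabilities and costs (in millions) are invented for illustration. A more advanced model provides imperfect information, so EVPI is only an upper bound on what it could be worth.

```python
# Hypothetical prior belief about the ground, and total cost (in millions)
# of each design in each state, including the loss if the design fails.
PRIOR = {"strong": 0.7, "weak": 0.3}
COST = {
    "steep": {"strong": 1.0, "weak": 5.0},  # cheap, but fails if the ground is weak
    "flat": {"strong": 1.8, "weak": 1.8},   # expensive, but stands either way
}


def expected_cost(design: str, prior: dict[str, float]) -> float:
    """Expected cost of committing to one design before the state is known."""
    return sum(p * COST[design][state] for state, p in prior.items())


def best_without_information(prior: dict[str, float]) -> str:
    """Design with the lowest expected cost under the prior alone."""
    return min(COST, key=lambda design: expected_cost(design, prior))


def expected_cost_with_perfect_information(prior: dict[str, float]) -> float:
    """Expected cost if we learn the true state first, then pick the best design."""
    return sum(p * min(COST[design][state] for design in COST) for state, p in prior.items())


def evpi(prior: dict[str, float]) -> float:
    best = best_without_information(prior)
    return expected_cost(best, prior) - expected_cost_with_perfect_information(prior)


print("best design without more information:", best_without_information(PRIOR))
print(f"expected value of perfect information: {evpi(PRIOR):.2f} million")
```

If the extra investigation and modelling needed to tell the two states apart would cost more than this value, the simpler approach wins on these assumptions.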

Limit Equilibrium is used because it is "cheap and good enough." I wonder how true this is. I suspect Limit Equilibrium results may be more variable than we hope, and that in some contexts the cost-benefit optimum may be closer to "more complexity" than current practice reflects. If we want to move toward more risk-based decision-making, then we need to better understand the risk introduced by the variability of typical Limit Equilibrium practice itself.

Searching for Equilibrium

There is also a big, related question in the background: are regulated Factors of Safety good targets?

From Wightman and Norris, writing for the New Zealand Geotechnical Society:

> [...] Target FoS are just based on old targets, which are based on still older targets, admittedly with adjustments made over the years based on experience, but with no explicitly expressed mathematical basis, except perhaps for the notion that the FoS should be more than 1 but not too much more than 1. [6]

Modern codes, like the Eurocodes [7], try to explicitly calibrate their requirements so that designs are "sufficiently safe as well as economic," where the authors tie "safe" to "socially accepted value[s] for the individual risk level related to structural failure" [7].

A commonly referenced level of "acceptable risk" in geotechnical and structural engineering regulation is that buildings should be safe enough that the annual probability of an individual in a given structure dying as a consequence of its failure is something like \(10^{-6}\) (one-in-a-million) to \(10^{-5}\) (one-in-a-hundred-thousand) [7, 8, 9]. To give some context (very approximate, potentially almost misleading, but numerically equivalent [10]), this is the same annual risk as smoking roughly one to seven cigarettes a year [11], or spending between a quarter of a day and two and a half days backcountry skiing each year [12]. This gets into the territory of what level of risk is "acceptable," and who decides: questions that require ideas from economics, philosophy, and regulation. I'll explore those in Part 2.
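The conversion is simple arithmetic. A minimal sketch using the per-activity figures cited in the footnotes (1.4 micromorts per cigarette, 4 micromorts per day of backcountry skiing in Canada), which are themselves rough:

```python
MICROMORT = 1e-6             # a one-in-a-million chance of death
CIGARETTE_MICROMORTS = 1.4   # per cigarette, as cited in the footnotes
SKI_DAY_MICROMORTS = 4.0     # per day of backcountry skiing in Canada, as cited


def activity_equivalents(annual_risk: float) -> tuple[float, float]:
    """Convert an annual probability of death into cigarettes and ski days per year."""
    micromorts = annual_risk / MICROMORT
    return micromorts / CIGARETTE_MICROMORTS, micromorts / SKI_DAY_MICROMORTS


for annual_risk in (1e-6, 1e-5):
    cigarettes, ski_days = activity_equivalents(annual_risk)
    print(f"{annual_risk:.0e} per year ~ {cigarettes:.1f} cigarettes "
          f"or {ski_days:.2f} ski days per year")
```

This gives about 0.7 cigarettes or 0.25 ski days for \(10^{-6}\), and about 7.1 cigarettes or 2.5 ski days for \(10^{-5}\).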

This can clearly become complicated quickly. Still, for me, a tractable first step toward understanding the best way to model, and toward making decisions about the reliability of our designs, is to better understand how variable Limit Equilibrium can be. That begins to show what a Factor of Safety of 1.5 really implies, by giving a better sense of the distribution of Factors of Safety produced by analyses in practice.
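One way to see why that distribution matters is to treat the Factor of Safety as a random variable and ask how likely it is to fall below 1. A minimal sketch, assuming FoS is lognormally distributed (a common simplification in reliability-based geotechnical work, not something the Project Simulator is claimed to use) and that the scatter is described by a hypothetical coefficient of variation:

```python
import math


def standard_normal_cdf(x: float) -> float:
    """Phi(x) for a standard normal variable, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def lognormal_fos_reliability(mean_fos: float, cov: float) -> tuple[float, float]:
    """Reliability index and P(FoS < 1), assuming FoS is lognormally distributed.

    mean_fos is the mean Factor of Safety; cov is its coefficient of variation.
    """
    sigma_ln = math.sqrt(math.log(1.0 + cov ** 2))    # standard deviation of ln(FoS)
    mu_ln = math.log(mean_fos) - 0.5 * sigma_ln ** 2  # mean of ln(FoS)
    beta = mu_ln / sigma_ln                           # distance to ln(1) = 0, in std devs
    return beta, standard_normal_cdf(-beta)


# The same mean FoS of 1.5 with increasing (hypothetical) scatter.
for cov in (0.1, 0.2, 0.3):
    beta, p_fail = lognormal_fos_reliability(1.5, cov)
    print(f"COV {cov:.1f}: reliability index {beta:.2f}, P(FoS < 1) {p_fail:.1e}")
```

Under these assumptions, the same nominal Factor of Safety of 1.5 corresponds to a probability of falling below 1 anywhere from roughly \(3 \times 10^{-5}\) to roughly 0.1, depending only on the assumed scatter.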

I want to start with a constrained case that probably represents a lower bound on the variability seen in practice, but one that is reasonably well calibrated. That is what I have tried to create in the Project Simulator.

Similar to Reid and Fourie's (2023) "round robin" study, I believe that insight can be gained by comparing how engineers analyze slopes. Where Reid and Fourie focus on sophisticated interpretation and calculation of slope stability, I am interested in inter-engineer variability in additional dimensions: where engineers choose to place boreholes, where they draw analysis cross-sections, and how they interpret stratigraphy and parameters using simpler tools.

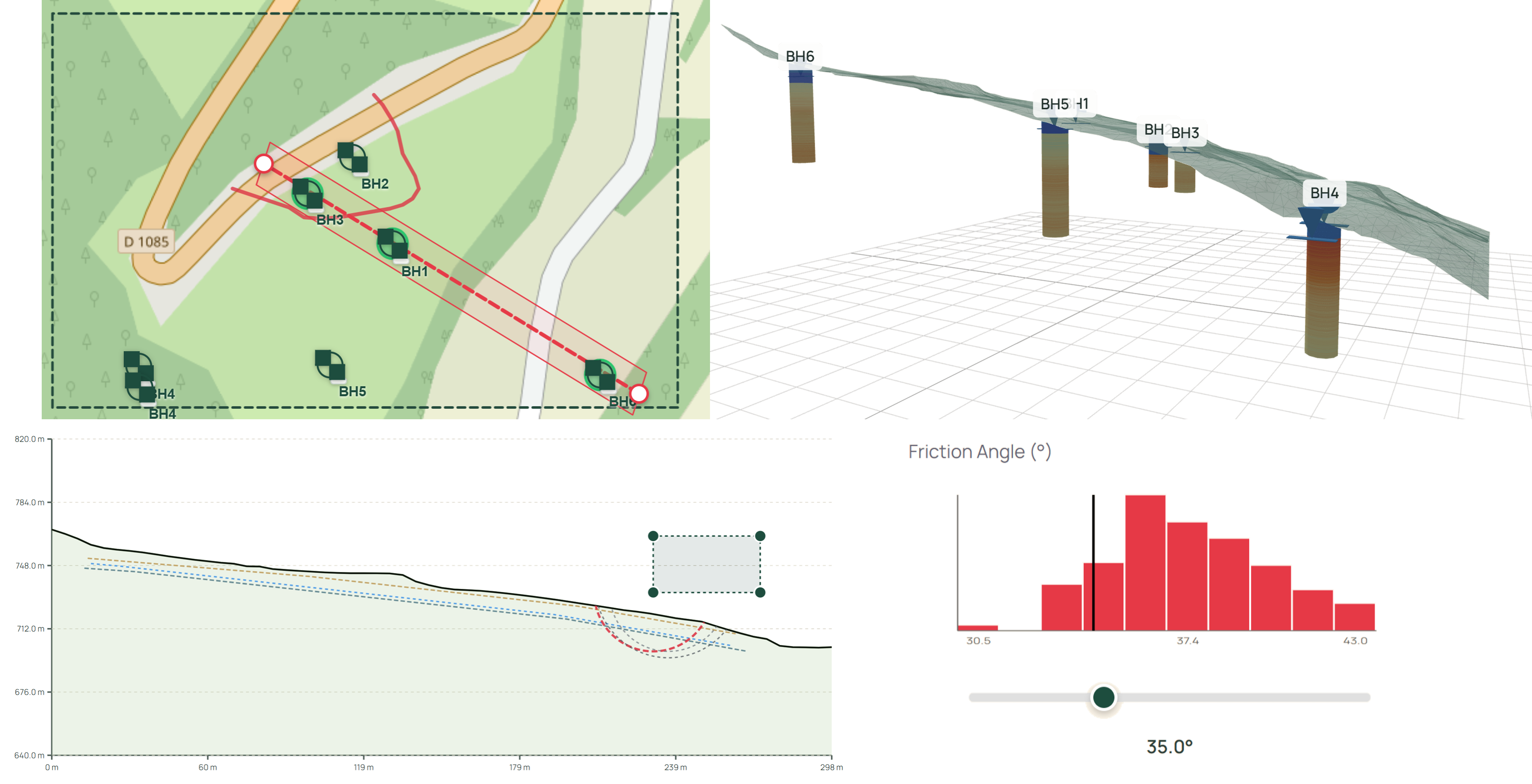

To do this, I developed a constrained workflow that I call the Project Simulator. It uses a synthetic geological model and allows the drilling of boreholes, the cutting of cross-sections, the interpretation of stratigraphy and parameters, and the calculation of Limit Equilibrium stability on a single web page. With enough responses (feel free to share!), I intend to quantify variability across a broader portion of geotechnical practice.

Specific Goals of the Project Simulator

Some specific questions I hope to answer:

- Investigation Strategy
  - Where do people put boreholes, how deep, how many, and in what order?
  - How do people engage with information from boreholes? Does looking at sections drive people to add more boreholes?
- Conceptual Model
  - Where do people choose to draw analysis cross-sections?
  - How do people interpret stratigraphy and groundwater from the same limited information?
  - How do people choose input parameters when using simpler tools?
- Model Result
  - How do the results of the simple analysis compare with those of more advanced analyses, given access to the true distribution of parameters?
  - How do Factors of Safety compare to Probability of Failure or reliability values?

I think the Project Simulator may also have benefits beyond research, potentially as an educational tool that introduces the core of slope stability analysis to people interested in the process. I hope you find the Project Simulator interesting, or that you share some of my thoughts about Limit Equilibrium.

- [1] John Krahn. "The limits of limit equilibrium analyses." 2001 Hardy Lecture.

- [2] Consequence can be a very broad term, and could be potential damage to an ecosystem, a spiritual site, a roadway, or a nuclear energy facility. This does not discount academic study of landslides, which is tremendously useful because it creates knowledge. For example, we might learn something about how landslides work broadly by studying a landslide in the middle of nowhere.

- [3] In a Newtonian F = ma, "objects accelerate when forces are applied" sense.

- [4] To be slightly more specific (but still imprecise), the expected shape of a landslide is drawn and numerically divided into a set of slices. Each slice is conceptualized as a free body, and forces and moments are balanced across slices. A further explanation can be found in this set of UBC Geological Engineering course slides.

- [5] In Norway, where quick (highly sensitive and brittle) clays are a significant problem, an additional margin of safety is required to account for the fact that the Limit Equilibrium Method (LEM) does not model this brittle behaviour well. This practice is effectively part of the code, and certainly part of the standard of care. When using LEM to calculate stability in quick clay zones, the required Factor of Safety is multiplied by 1.15; i.e., if the building code requires a Factor of Safety of 1.3 for your house, the quick-clay-adjusted requirement is 1.495.

- [6] Alan Wightman, Naomi Norris. "Risk-based approach to Factor of Safety selection." Blog post.

- [7] Joint Research Centre. "Reliability background of the Eurocodes."

- [8] Town of Canmore. Steep Creek Hazard and Risk Policy, Council Resolution 239-2016, as cited in Holm et al. 2018.

- [10] Micromorts are a useful intuition pump (1 micromort = a one-in-a-million chance of death), but the numbers are rough and context-dependent. Many quantitative tools exist to estimate the impact of risky activities on our lives or health, like quality-adjusted life years. All have flaws because they are models of our very complex values.

- [11] Smoking one cigarette is 1.4 micromorts. This is a somewhat sketchy Wikipedia claim with a broken link to its source, but it is widely cited.

- [12] A day of backcountry skiing in Canada with normal risk precautions is 4 micromorts.